By Maya Chen · March 17, 2026 · AI & Machine Learning

NVIDIA GTC 2026: Jensen Huang’s $1 Trillion Vision, Vera Rubin, and the Birth of the AI Agent OS

Jensen Huang’s GTC 2026 keynote was the most consequential AI infrastructure announcement in years. Here’s everything that matters — from the Vera Rubin platform to the OpenClaw agent OS, the Groq acquisition, and a $1 trillion compute demand forecast that sounds impossible until you do the math.

Vera Rubin: 3.6 Exaflops

$1T Compute Demand

35x Throughput/MW (Groq+VR)

18M Vehicles/yr on RoboTaxi

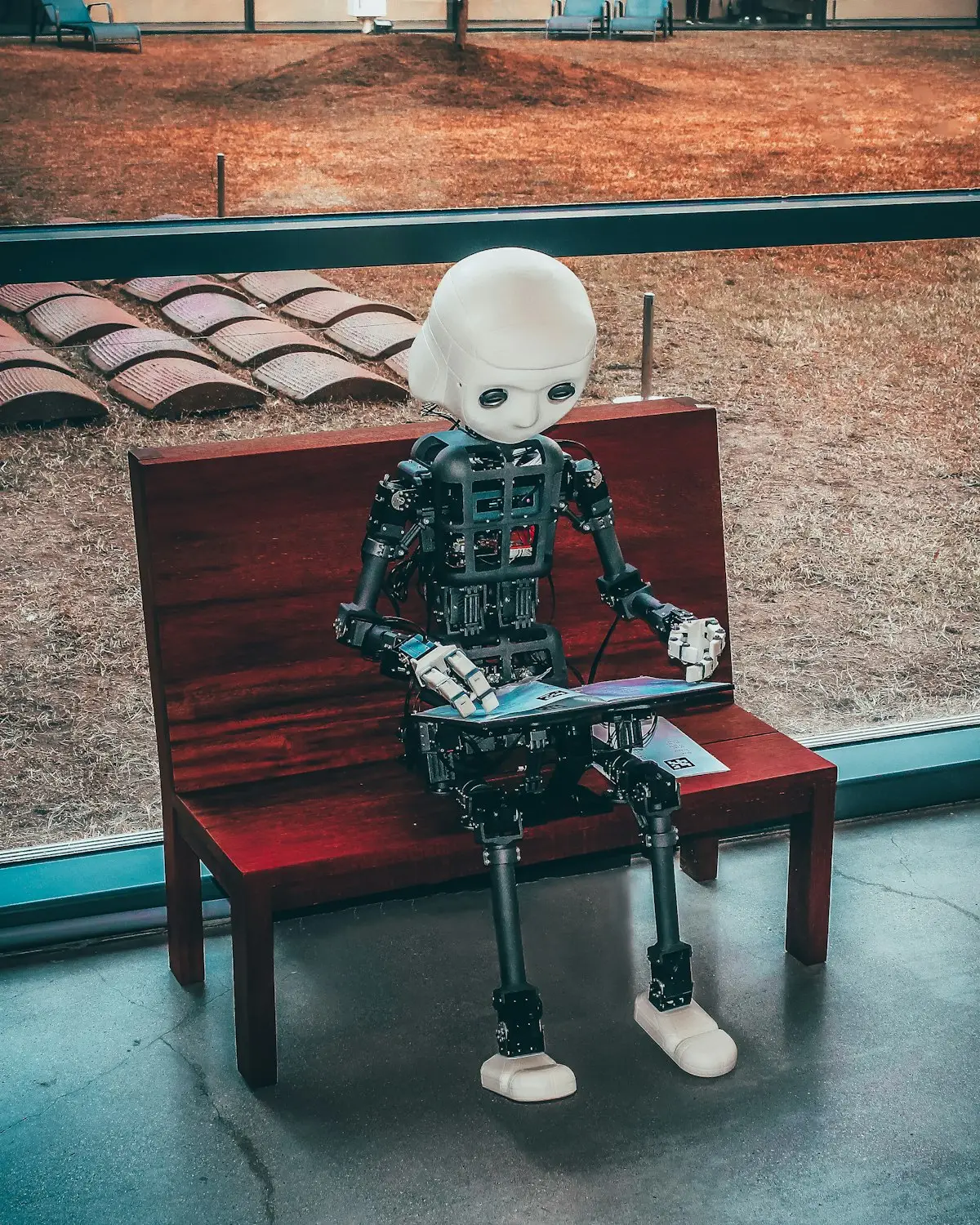

110 Robots on Show Floor

Key Insight

“The AGI era is here.” — Jensen Huang, GTC 2026. With Vera Rubin delivering 3.6 exaflops, OpenClaw redefining how AI agents coordinate, and demand projections of $1 trillion, NVIDIA isn’t just selling GPUs anymore — it’s architecting the operating system of artificial general intelligence.

The Vera Rubin Number: What 3.6 Exaflops Actually Means

Named after Vera Rubin, the astronomer whose measurements of galaxy rotation curves provided key evidence for dark matter, the Vera Rubin GPU platform is NVIDIA’s most ambitious silicon to date. When Jensen Huang announced 3.6 exaflops of AI compute in a single rack-scale system, the number seemed almost fictional, but the engineering behind it is very real.

To put 3.6 exaflops in context: one exaflop is 10¹⁸ floating-point operations per second, so the Vera Rubin system performs 3.6 quintillion operations every second. (AI compute figures like this are typically quoted at low numerical precision, so they are not directly comparable to the double-precision figures used to rank scientific supercomputers.) According to SemiAnalysis, that translates to 50x more tokens-per-watt than the Hopper H200: not an incremental gain but a generational leap that changes which workloads are economically feasible to run.
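A rough way to make exaflops tangible is to ask how many tokens per second they could feed. The sketch below uses the common rule of thumb that a dense transformer needs about 2 × N floating-point operations per generated token (N = parameter count). The 70-billion-parameter model and 30% utilization are hypothetical inputs chosen for illustration, not NVIDIA figures.

```python
# Back-of-envelope: how many tokens per second could 3.6 exaflops feed?
# Assumptions (hypothetical, for illustration only):
#   - a dense transformer needs roughly 2 * N FLOPs per generated token (N = parameters)
#   - the hardware sustains a fixed fraction of its peak (utilization)
PEAK_FLOPS = 3.6e18  # 3.6 exaflops = 3.6 * 10**18 operations per second


def tokens_per_second(peak_flops: float, params: float, utilization: float) -> float:
    """Compute-bound throughput ceiling using the ~2*N FLOPs-per-token rule of thumb."""
    flops_per_token = 2.0 * params
    return peak_flops * utilization / flops_per_token


# Hypothetical 70-billion-parameter model at an assumed 30% utilization.
rate = tokens_per_second(PEAK_FLOPS, params=70e9, utilization=0.30)
print(f"{rate:,.0f} tokens/s")
```

With those assumptions the ceiling is roughly 7.7 million tokens per second. Real decode throughput is usually limited by memory bandwidth rather than raw FLOPs, so treat the result as an upper bound, not a forecast.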

The architecture behind these numbers is as important as the headline figure. Vera Rubin integrates 7 chip types across 5 rack-scale computer configurations, all connected via NVLink with 260 TB/s of all-to-all bandwidth. That bandwidth figure matters as much as the compute figure: it lets GPUs share working data with far less communication overhead, enabling model parallelism at scales that earlier interconnect speeds made impractical.

The cooling design is equally significant. Vera Rubin is 100% liquid-cooled with 45°C coolant, warm enough that many facilities can reject the heat without energy-hungry chillers, and NVIDIA cut installation time from 2 days to 2 hours through modular design. For hyperscalers, that is more than a convenience: it means deploying 50-rack AI factories in days rather than weeks, shortening the gap between capital expenditure and revenue.
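The deployment claim is simple arithmetic, and the gap between the two install times compounds across a whole site. This sketch assumes, hypothetically, that each install crew handles racks one after another; the `crews` parameter is an illustrative knob, not a figure from NVIDIA.

```python
def total_install_hours(racks: int, hours_per_rack: float, crews: int = 1) -> float:
    """Wall-clock hours to install `racks` racks with `crews` working in parallel.

    Hypothetical model: each crew installs ceil(racks / crews) racks back to back.
    """
    racks_per_crew = -(-racks // crews)  # ceiling division
    return racks_per_crew * hours_per_rack


old = total_install_hours(50, hours_per_rack=48)  # 2 days per rack
new = total_install_hours(50, hours_per_rack=2)   # 2 hours per rack
print(f"{old / 24:.1f} days vs {new / 24:.1f} days")
```

For a single crew this prints `100.0 days vs 4.2 days`; adding crews shrinks both figures, but the 24x ratio between them stays roughly the same.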

Jensen’s framing of the demand side was equally provocative: reasoning AI models increase per-workload compute by approximately 10,000x compared to standard inference. NVIDIA’s claim of total AI compute demand rising roughly 1 million times in just two years then requires usage to grow about 100x over the same period, since 10,000 × 100 = 1,000,000. Granting that usage growth, the figure is arithmetically defensible rather than hyperbolic.
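The demand claim reduces to one multiplication: per-query growth times usage growth. The sketch below solves for the usage growth that a 1-million-times total implies given 10,000x per query, then converts it to an annual rate under the hypothetical assumption of steady compounding over the two years.

```python
def implied_usage_growth(total_growth: float, per_query_growth: float) -> float:
    """Usage growth needed so that per_query_growth * usage_growth == total_growth."""
    return total_growth / per_query_growth


growth = implied_usage_growth(total_growth=1_000_000, per_query_growth=10_000)
# Hypothetical: spread evenly over two years of compounding.
annual = growth ** 0.5
print(growth, annual)
```

The result is 100x total usage growth, or about 10x per year if the growth compounds evenly.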

The Groq Acquisition: Inference Changes Everything

The most strategically significant announcement buried in the GTC keynote was NVIDIA’s acquisition of Groq — the Language Processing Unit (LPU) startup that had built perhaps the fastest inference chip in the world. Groq’s architecture, optimized entirely for decode-phase inference rather than training, represented a direct challenge to NVIDIA’s GPU dominance in the deployment half of the AI stack.

Rather than compete with Groq, NVIDIA acquired them and combined their capabilities. The result is remarkable: pairing the Groq 3 LPX chip (which excels at inference decode) with the Vera Rubin GPU via NVIDIA Dynamo software yields 35x throughput per megawatt versus Blackwell alone. That’s not an optimization — that’s a different cost curve entirely for the inference half of AI workloads.
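The Groq-plus-Vera Rubin pairing rests on splitting inference into two phases: prefill, which processes the whole prompt at once and is compute-heavy, and decode, which emits tokens one at a time and is dominated by latency and memory bandwidth. The sketch below shows that disaggregated-serving idea in miniature. The class names, pool names, and routing logic are hypothetical and do not reflect NVIDIA Dynamo’s actual API; a real system must also move the KV cache between pools.

```python
from dataclasses import dataclass, field
from typing import List, Tuple


@dataclass
class Request:
    request_id: int
    prompt_tokens: int   # processed in parallel during prefill
    max_new_tokens: int  # generated one at a time during decode


@dataclass
class Pool:
    name: str
    jobs: List[Tuple[int, int]] = field(default_factory=list)  # (request_id, tokens)


class DisaggregatedRouter:
    """Hypothetical sketch: send each request's prefill and decode phases to different pools."""

    def __init__(self, prefill: Pool, decode: Pool) -> None:
        self.prefill = prefill
        self.decode = decode

    def submit(self, req: Request) -> None:
        # Prefill: read the whole prompt and build the KV cache (compute-heavy).
        self.prefill.jobs.append((req.request_id, req.prompt_tokens))
        # Decode: emit output tokens sequentially from that cache (bandwidth/latency-heavy).
        # KV-cache transfer between pools is omitted from this sketch.
        self.decode.jobs.append((req.request_id, req.max_new_tokens))


router = DisaggregatedRouter(Pool("gpu-prefill"), Pool("lpu-decode"))
router.submit(Request(request_id=1, prompt_tokens=2_000, max_new_tokens=500))
print(router.prefill.jobs, router.decode.jobs)
```

The design point is that each pool can be sized and built for its own bottleneck instead of one chip compromising between the two.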

Why does inference efficiency matter so much? Because a model is trained once, but inference happens billions of times per day. As reasoning models become the norm, with chains of thought that consume 10,000x more compute per query than standard inference, the economics of serving those queries will determine which AI deployments are profitable. If the 35x figure holds up in production, NVIDIA’s stack becomes the most energy-efficient option at scale.
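The “train once, serve billions of times” point can be made concrete with a break-even calculation: after how many days does cumulative inference energy match the one-time training energy? All three inputs below (10 GWh to train, 0.3 Wh per query, one billion queries a day) are hypothetical placeholders chosen for illustration.

```python
def breakeven_days(training_wh: float, wh_per_query: float, queries_per_day: float) -> float:
    """Days of serving after which cumulative inference energy equals one-time training energy."""
    return training_wh / (wh_per_query * queries_per_day)


# Hypothetical inputs: 10 GWh training run, 0.3 Wh per query, 1e9 queries/day.
days = breakeven_days(training_wh=10e9, wh_per_query=0.3, queries_per_day=1e9)
print(f"{days:.1f} days")
```

With these made-up inputs, serving overtakes training in about a month; raising the per-query energy, as reasoning models do, shortens that window further.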

The acquisition also signals something about NVIDIA’s strategy: they’re not content to dominate training compute and cede inference to specialized chips. By absorbing Groq’s LPU technology, NVIDIA now offers a complete inference-to-training stack that competitors cannot easily replicate. This vertical integration is the same playbook that made CUDA dominant over the past 20 years — and the Groq deal extends it into the decode era.

OpenClaw: The AI Agent Operating System

If Vera Rubin is the hardware story of GTC 2026, OpenClaw is the software story — and arguably the more consequential announcement for the next decade of AI development. Jensen Huang called it “the fastest-growing open-source project in history, surpassing Linux’s 30-year adoption in weeks.” That framing, whether literally true or aspirationally ambitious, points to something real about where AI infrastructure is heading.

OpenClaw is an open-source agentic AI framework that provides the essential primitives for multi-agent AI systems: resource management, tool access APIs, LLM connectivity, scheduling, and sub-agent spawning. Think of it as the missing OS layer between raw LLM capabilities and enterprise agentic applications. Where today’s agent frameworks (LangChain, AutoGen, CrewAI) provide workflow orchestration, OpenClaw operates at a lower level — managing the compute resources, tool permissions, and agent lifecycles that those frameworks sit on top of.
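To make “OS layer for agents” less abstract, here is a minimal sketch of three of the primitives listed above: tool permissions with resource budgets, round-robin scheduling, and sub-agent spawning where a child can never exceed its parent’s rights. Everything here (`AgentRuntime`, `spawn`, `call_tool`, `run_once`) is hypothetical and is not OpenClaw’s real API; tools are plain stub functions, and LLM connectivity is left out.

```python
from collections import deque
from dataclasses import dataclass
from typing import Callable, Deque, Dict, List, Optional, Set


@dataclass
class Agent:
    name: str
    allowed_tools: Set[str]  # tool permissions
    token_budget: int        # resource limit for this agent


class AgentRuntime:
    """Hypothetical sketch of OS-style agent primitives (not OpenClaw's real API)."""

    def __init__(self, tools: Dict[str, Callable[[str], str]]) -> None:
        self.tools = tools                  # tool access: name -> callable
        self.ready: Deque[Agent] = deque()  # scheduling: simple round-robin queue
        self.log: List[str] = []

    def spawn(self, name: str, allowed_tools: Set[str], token_budget: int,
              parent: Optional[Agent] = None) -> Agent:
        # Sub-agent spawning: a child never gets tools its parent lacks,
        # and its budget is capped at the parent's remaining budget.
        if parent is not None:
            allowed_tools = allowed_tools & parent.allowed_tools
            token_budget = min(token_budget, parent.token_budget)
        agent = Agent(name, set(allowed_tools), token_budget)
        self.ready.append(agent)
        return agent

    def call_tool(self, agent: Agent, tool: str, arg: str, cost: int) -> str:
        # Resource management: check permission and budget before running the tool.
        if tool not in agent.allowed_tools:
            raise PermissionError(f"{agent.name} may not use {tool}")
        if cost > agent.token_budget:
            raise RuntimeError(f"{agent.name} is over budget")
        agent.token_budget -= cost
        self.log.append(f"{agent.name}:{tool}")
        return self.tools[tool](arg)

    def run_once(self, step: Callable[["AgentRuntime", Agent], None]) -> None:
        # Give every ready agent one step, then put it back at the end of the queue.
        for _ in range(len(self.ready)):
            agent = self.ready.popleft()
            step(self, agent)
            self.ready.append(agent)


runtime = AgentRuntime({"search": lambda q: f"results for {q}", "shell": lambda c: "ok"})
lead = runtime.spawn("lead", {"search", "shell"}, token_budget=1_000)
helper = runtime.spawn("helper", {"search", "email"}, token_budget=5_000, parent=lead)
print(helper.allowed_tools, helper.token_budget)

try:
    runtime.call_tool(helper, "shell", "ls", cost=10)
except PermissionError as err:
    print(err)

runtime.run_once(lambda rt, agent: print(rt.call_tool(agent, "search", "GTC", cost=1)))
```

The helper asked for `email` and a 5,000-token budget but receives only `search` and 1,000 tokens, because spawning intersects its request with the parent’s rights. That inheritance rule is the kind of guarantee an agent OS layer would enforce beneath workflow frameworks.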

The enterprise-grade version, NemoClaw, is NVIDIA’s reference design built on OpenClaw with three layers of security architecture: OpenShell runtime sandboxing (which isolates agents from sensitive system resources), a privacy router (which screens data before it reaches external LLM APIs), and network guardrails (which enforce policy constraints on agent-to-agent communication). This security stack addresses the most common objection enterprises raise about agentic AI: “What prevents an agent from doing something it shouldn’t?”
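Two of NemoClaw’s three layers can be illustrated in a few lines. The sketch below is a hypothetical stand-in, not NemoClaw code: a “privacy router” that redacts email addresses and US Social Security numbers with regular expressions before a prompt leaves for an external API, and a “network guardrail” that only permits agent-to-agent messages on an explicit allow-list. Runtime sandboxing (the OpenShell layer) depends on operating-system isolation and is left out.

```python
import re
from typing import Set, Tuple

# Hypothetical patterns; a production privacy router would need far broader coverage.
EMAIL = re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def privacy_route(prompt: str) -> str:
    """Redact obvious PII before a prompt is sent to an external LLM API."""
    prompt = EMAIL.sub("[EMAIL]", prompt)
    return SSN.sub("[SSN]", prompt)


# Network guardrail: explicit allow-list of (sender, receiver) pairs.
ALLOWED_LINKS: Set[Tuple[str, str]] = {("planner", "researcher"), ("researcher", "planner")}


def guard_message(sender: str, receiver: str) -> bool:
    """Permit agent-to-agent messages only on allow-listed links."""
    return (sender, receiver) in ALLOWED_LINKS


print(privacy_route("Contact jane.doe@example.com, SSN 123-45-6789"))
print(guard_message("researcher", "billing"))
```

The allow-list is deny-by-default: any link not explicitly listed is blocked, which is the safer failure mode for the “what prevents an agent from doing something it shouldn’t?” question.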

The strategic implication is significant: if OpenClaw becomes the de facto standard for agentic AI infrastructure — the way Linux became the de facto OS for servers — then NVIDIA’s position in the AI stack extends far beyond silicon. Every enterprise running agentic AI workloads on OpenClaw creates a gravitational pull toward NVIDIA hardware optimized for OpenClaw’s runtime. This is CUDA strategy applied to the agent era.

CUDA’s 20th anniversary at GTC 2026 was a fitting backdrop for this announcement. Twenty years ago, CUDA looked like an academic curiosity; today it is the bedrock of a multi-trillion-dollar industry. Jensen’s bet is that OpenClaw will occupy a similar position for the next twenty years: the invisible infrastructure layer that everything else runs on.

The RoboTaxi Bet: 18 Million Vehicles Per Year

NVIDIA’s autonomous vehicle platform expanded dramatically at GTC 2026, with BYD, Hyundai, Nissan, and Geely joining the existing roster of Mercedes, Toyota, and GM. Together, these seven manufacturers represent approximately 18 million vehicles per year — a figure that contextualizes why NVIDIA’s automotive revenue has been the fastest-growing segment of their business.

The technical centerpiece of the expanded AV platform is the Alpamayo model, which gives autonomous vehicles natural language reasoning and narration capabilities. Rather than treating autonomous driving purely as a perception-and-control problem, Alpamayo enables vehicles to reason about ambiguous scenarios in natural language — essentially giving AV systems the same contextual understanding that a human driver uses when navigating an unexpected construction zone or interpreting an unusual traffic pattern.

The Uber partnership announced at GTC adds a commercial distribution layer to the hardware and software stack. NVIDIA provides the compute; Uber provides the deployment network and consumer interface. This is the same division of labor that made AWS successful in cloud computing — the infrastructure provider and the deployment operator playing complementary rather than competitive roles.

What makes the RoboTaxi bet interesting from an infrastructure perspective is the data flywheel it creates. Every mile driven by an NVIDIA-powered autonomous vehicle generates training data that feeds back into improved models, which are then deployed to more vehicles. With a partner base representing roughly 18 million vehicles a year, NVIDIA’s autonomous vehicle platform could become one of the largest data collection and model improvement operations on the planet.

The Feynman 2028 Roadmap: What Comes After Vera Rubin

NVIDIA’s roadmap transparency is one of their most underrated competitive advantages. By committing to public two-year technology timelines, they allow ecosystem partners — server OEMs, data center operators, software developers — to make capital investment decisions with confidence. The Feynman 2028 roadmap, named after physicist Richard Feynman, extends this visibility into NVIDIA’s next architectural generation.

The Feynman platform encompasses five major technology areas: the LP40 LPU (a next-generation language processing unit building on the Groq integration), the Rosa CPU (NVIDIA’s own processor architecture for AI factory management tasks), the BlueField 5 data processing unit (enhanced networking and storage acceleration), Kyber-CPO optical networking (co-packaged optics for higher-bandwidth, lower-latency interconnects), and the extraordinary Vera Rubin Space-1 — orbital data centers.

The orbital data center concept deserves more attention than it received in the GTC coverage. Vera Rubin Space-1 envisions AI compute infrastructure deployed in low Earth orbit, eliminating land costs, drawing on solar power without atmospheric losses (and, in suitable orbits, with near-continuous sunlight), and potentially enabling latency-optimized paths for global AI inference. This is not a near-term product announcement but a serious engineering roadmap item that signals where NVIDIA sees the physical limits of terrestrial AI infrastructure.

NVIDIA DSX (Digital Services Experience) and its Omniverse digital twin technology add another dimension to the Feynman story. DSX allows AI factory designers to simulate their entire infrastructure stack — from rack placement to thermal dynamics to network topology — before deploying a single physical component. DSX MaxQ, the power optimization layer, delivers 2x improvement in tokens-per-watt through software alone, without requiring new hardware.
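Software-only efficiency gains like the one claimed for DSX MaxQ are plausible because throughput usually saturates as power rises while fixed overheads stay constant, so the most efficient operating point is often below the maximum. The sketch below sweeps hypothetical power caps over a made-up throughput curve and picks the cap with the best tokens per joule (tokens per second divided by watts). The curve and constants are invented for illustration; this article does not describe how MaxQ works, and the gain the toy model shows is unrelated to the 2x figure.

```python
import math

STATIC_WATTS = 200.0  # hypothetical fixed draw (memory, fans, networking)
MAX_TPS = 1000.0      # hypothetical throughput ceiling, tokens/s
SCALE_WATTS = 400.0   # hypothetical: how quickly throughput saturates with power


def throughput(dynamic_watts: float) -> float:
    """Made-up saturating curve: each extra watt buys less throughput."""
    return MAX_TPS * (1.0 - math.exp(-dynamic_watts / SCALE_WATTS))


def tokens_per_joule(dynamic_watts: float) -> float:
    # tokens/s divided by total watts (joules/s) gives tokens per joule
    return throughput(dynamic_watts) / (STATIC_WATTS + dynamic_watts)


def best_power_cap(caps) -> int:
    """Return the cap with the highest tokens per joule."""
    return max(caps, key=tokens_per_joule)


caps = range(100, 1001, 50)
best = best_power_cap(caps)
print(best, round(tokens_per_joule(best) / tokens_per_joule(1000), 2))
```

In this toy model the best cap lands at 350 W, about 1.39x more tokens per joule than the highest cap in the sweep, at the cost of lower per-GPU throughput. Across a fleet, that trade is exactly the kind a software power layer can tune without new hardware.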

What GTC 2026 Means for Enterprise AI Strategy

For enterprise technology leaders, the GTC 2026 announcements require a strategic recalibration. The announcements aren’t incremental improvements to existing AI infrastructure — they represent a step-change in what’s feasible and what’s economical to build. Three implications stand out as immediately actionable.

First, the economics of reasoning AI workloads are changing faster than most enterprise AI budgets have anticipated. If reasoning models truly increase per-query compute by 10,000x while Vera Rubin delivers 50x better tokens-per-watt, the energy cost of each token, and of each reasoning query compared with running it on older hardware, falls about 50x. A reasoning query still needs roughly 200x the energy of a standard query on Hopper (10,000 ÷ 50), so the cost of advanced AI improves only for organizations with access to next-generation infrastructure. Enterprises locked into older hardware generations on multi-year contracts will face a growing competitive disadvantage in the cost of intelligence.
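The two multipliers in this argument combine by simple division: 10,000x more compute per reasoning query at 50x better tokens-per-watt. The sketch assumes, as a simplification, that energy scales directly with compute and that the 50x efficiency gain applies equally to reasoning workloads.

```python
def relative_energy_per_query(compute_multiplier: float, efficiency_multiplier: float) -> float:
    """Energy per query relative to a baseline = extra compute / efficiency gain."""
    return compute_multiplier / efficiency_multiplier


# Article figures: ~10,000x compute per reasoning query, 50x tokens-per-watt.
reasoning_query = relative_energy_per_query(10_000, 50)
# Same workload mix, new hardware: energy per token relative to the baseline.
per_token = relative_energy_per_query(1, 50)
print(reasoning_query, per_token)
```

Per token the cost falls 50-fold, but per reasoning query it is still about 200 times the old baseline, which is why the hardware generation an enterprise runs on matters so much.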

Second, OpenClaw and NemoClaw deserve serious evaluation as enterprise infrastructure standards. If NVIDIA’s prediction about OpenClaw’s adoption velocity is directionally correct, organizations that build proprietary agent frameworks today may face expensive migration costs in 2-3 years. Evaluating OpenClaw’s security model — particularly the three-layer NemoClaw architecture — against enterprise requirements now is lower-cost than rebuilding later.

Third, the open models released at GTC (Nemotron 3, Cosmos 2, Groot 2, Alpamayo, BioNemo, and Earth-2) offer significant opportunities for organizations in specific verticals. BioNemo for pharmaceutical discovery, Earth-2 for climate and environmental modeling, and Groot 2 for robotics are state-of-the-art starting points that most enterprise teams couldn’t build internally. Evaluating these models against proprietary alternatives before the next budget cycle is worth prioritizing.

Jensen Huang’s declaration that “the AGI era is here” will be debated by AI researchers for years. From a practical enterprise standpoint, though, the debate matters less than the capability: AI systems can now carry out autonomous, multi-step reasoning at economically viable cost, and the GTC 2026 announcements aim to make that capability an order of magnitude more accessible.

Platform Comparison: Vera Rubin vs Blackwell vs Hopper H200

| Metric | Hopper H200 | Blackwell | Vera Rubin |
|---|---|---|---|
| Rack-Scale AI Compute (vendor peak; precision may differ) | ~72 petaflops | ~720 petaflops | 3.6 exaflops |
| Tokens/Watt (relative) | 1x (baseline) | ~5x | 50x |
| NVLink Bandwidth | ~900 GB/s per GPU | ~1.8 TB/s per GPU | 260 TB/s rack all-to-all |
| Cooling System | Air + optional liquid | Liquid-cooled | 100% liquid, 45°C |
| Install Time | 3-5 days | 2 days | 2 hours |
| Chip Types | 2 | 4 | 7 |

Frequently Asked Questions

What is Vera Rubin?

Vera Rubin is NVIDIA’s next-generation AI computing platform, announced at GTC 2026. It delivers 3.6 exaflops of AI compute in a rack-scale configuration, with 260 TB/s NVLink all-to-all bandwidth and 50x better tokens-per-watt efficiency compared to the Hopper H200. It is 100% liquid-cooled and can be installed in 2 hours. The platform is named after astronomer Vera Rubin, whose measurements of galaxy rotation curves provided key evidence for dark matter.

How does OpenClaw compare to Linux?

Jensen Huang’s comparison to Linux was deliberate. Linux’s adoption built up over roughly 30 years; Jensen claimed OpenClaw surpassed that adoption “in weeks,” a statement that is more aspiration than verified metric but points to the strategic ambition. Like Linux, OpenClaw is open-source, provides fundamental infrastructure primitives, and is designed to run across diverse hardware. Unlike Linux, it is purpose-built for multi-agent AI workloads rather than general computing.

What is Alpamayo?

Alpamayo is NVIDIA’s AI model for autonomous vehicles, named after the Peruvian mountain. It enables vehicles to reason about driving scenarios in natural language — essentially giving autonomous cars the contextual understanding to narrate and explain their decisions. It was announced at GTC 2026 as the AI backbone for the expanded RoboTaxi platform, and is deployed across the seven automotive manufacturers that have joined the NVIDIA AV ecosystem.

Why did NVIDIA acquire Groq?

NVIDIA acquired Groq to dominate the inference side of the AI stack. Groq’s Language Processing Unit (LPU) is highly optimized for inference decode — the generation phase of language model output — while NVIDIA’s GPUs excel at training and prefill. By combining the Groq 3 LPX chip with Vera Rubin via the NVIDIA Dynamo software layer, NVIDIA achieved 35x throughput per megawatt versus Blackwell alone. This acquisition extends NVIDIA’s vertical integration across the complete AI workload lifecycle.

What is DLSS 5?

DLSS 5 (Deep Learning Super Sampling 5) is NVIDIA’s latest AI-powered gaming rendering technology. The first DLSS versions used AI to upscale lower-resolution frames to higher resolutions; DLSS 3 added AI frame generation, and DLSS 4 extended it to generate multiple frames for each traditionally rendered one. DLSS 5 goes further still, with the neural network generating entire new frames rather than only upscaled versions of existing ones. NVIDIA claims this approach delivers dramatically higher framerates, particularly at 4K and 8K resolutions, effectively multiplying playable framerate by 4x or more compared to native rendering.

Maya Chen covers NVIDIA, AI hardware, and the infrastructure powering the next wave of intelligence.